Transcript

Jen: Dysfunction in scrum teams or in product teams starts at the very beginning. It starts when people don’t agree on the problem that they’re solving, never mind the solution that’s being designed.

Peter: I’m Peter Merholz.

Jesse: And I’m Jesse James Garrett,

And we’re Finding Our Way…

Peter: Navigating the opportunities and challenges of design and design leadership.

Jesse: On today’s show, veteran UX research leader Jen Cardello of Fidelity Investments joins us to talk about building teams, building relationships, building credibility, and building the case for human-centered practices.

Jesse: Part of the reason I was interested in talking with you is that, from what we’ve heard and seen, there are a wide variety of answers to the question of where research should sit within an organization. We’ve heard from folks about research being more strongly aligned with product, research being more strongly aligned with the business side of things, and research being, in some cases, completely separate from a design group, almost like a peer or a service to it.

And so I’m curious about all of these different approaches: what you’ve seen work and what you’ve seen create challenges. Simply from an organizational perspective, where does research belong, and what are the different answers to that question?

Jen: Those are great questions. I guess it depends on the maturity of the organization and what outcomes it’s looking for. If you’re looking for research mainly to provide proof points to shore up a design group that is still trying to build its credibility, oftentimes you see those UX researchers living in design, because they’re trying to structure an ecosystem of tests that generate evidence. That evidence helps the designers stand behind the cases they’re making for the change they want to see in the product and the experiences.

That can be really uncomfortable, because it becomes apparent very quickly to the product organization, maybe the engineers, maybe leadership, what’s happening there. Those researchers may be working as hard as they can not to have bias, but purely because of where their function sits in the organization, it becomes clear. So in the organization I’m in now, all forms of research, which includes my partners in market research, behavioral economics, brand and advertising research, and customer loyalty, sit outside of marketing and outside of design. That is to ensure that those research techniques and our work aren’t weaponized by those organizations. That’s the intent, at least.

Peter: So this is a trend I’ve been seeing increasingly: research becoming its own group. What I would call a kind of 360-degree group that incorporates market research, user research, and I think you said analytics or data science. Some teams have customer service and support, or at least a connection there.

I like the idea that you don’t want research to be weaponized by whatever group that it’s part of. And I’m a fan of holism. And so having one research group that has all these different modes of inquiry, methodology, interrogation, makes a lot of sense to me. But how do you avoid, then, research being seen as simply an internal consulting practice that’s not invested in the day-to-day warp and weft of these teams that are trying to deliver new value?

Jen: Yeah, so that’s part of the alignment strategy. We address that with the org design of the research organization. So I have those four peers, market research, behavioral economics, brand and advertising research, customer loyalty. My group is the largest of those and it spreads out over four business units.

We use Spotify-esque language at Fidelity to talk about the way we’re organized from an agile perspective. The lowest, atomic level of organization, I guess, would be the squad. A group of squads is a tribe, a group of tribes is a domain, and a business unit has multiple domains.

What we’ve tried to do is align our research pods, which are three or four researchers each, against a domain. A domain could be something as big as wealth management, or it could be digitization of service, or financial wellness. So these are big themes, and we’ll have a small group of researchers working on that theme in that domain.

And that domain might have 50 or 60 squads. We’re not embedded at the squad level; there aren’t enough of us to do that. We have about a 15-to-1 ratio of squads to researchers, but that allows us to float at a higher altitude and see where there are things we might need to really understand about financial wellness from a right problem, right solution, done right perspective, which is the other piece of it: how do we classify the type of work we’re doing for the teams? So we do have specialization, I guess, aligned to themes of the business and to how the business thinks about bringing and representing itself in the market.

Jesse: It sounds like you have a degree of control over your own research agenda, independent of what the teams are asking of you. One of the things you see in organizations with this high level of specialization across teams is that any issue that doesn’t clearly fall into one of those tiny buckets doesn’t get addressed. But it sounds like you have some latitude to do more overarching research that touches a broader range of the experience your customers are having.

Jen: Yeah, that is true. There’s a balance between proactive and reactive work. In my dream situation, about 80% of the work we’re doing is proactive. It’s work we have discovered a need for because we’re interacting with the business leaders, product owners, and tribe leads, and figuring out what big initiatives they’re going after, so that we can lock arms with them and see them through right problem, right solution, and done right. So it’s this holistic relationship of getting them from that fuzzy state of not knowing where to go, what to focus on, and what unmet needs are there, to starting to ideate and test out solutions, and then to fine-tuning the design. So we’d like to see 80% of our work surfaced and commissioned in that sense.

And then 20% of our capacity is supposed to be reserved for reactive work because you can’t push that away. It’s going to happen. There’s going to be a team that says, “We need to present something. We actually need to get it in front of users.” You know, it’s more of a checkbox type of engagement.

But, you know, researchers can get really depressed if a hundred percent of their life is checkbox engagements, because it doesn’t feel like people genuinely want us there. They’re having us there as a CYA. So yeah, the ideal is that 80/20 split. It’s not quite there yet, but we can very crisply identify which things we’re working on are part of those big initiatives tied to the four business units.

Jesse: Are you actively seeking out business sponsors for that work or, if it’s not originating from the teams, how do you make the case to be able to do the work?

Jen: Yeah, so there’s some work that we are actually driving and saying, this is an initiative of insights gathering and harvesting that we think could feed many teams. And we think it’s important to do, versus there’s some big initiative that already has a business sponsor.

And we’re saying, “Hey, we can help you. Why don’t we talk about how I might be able to take you through an innovation swim lane or a transformation swim lane and show you what that would look like,” and having them say, “Yeah, come on in, join the team.” So when we are suggesting things, for example, laying out a landscape of insights using Jobs theory, or even as specifically as Outcome-Driven Innovation, it is important for us to shop around and find the domains, business units, and executives who say, “Yes, I do believe this is important, and we should have some researchers working on that.”

So we’ve been able to make a couple of things happen that way, particularly when we’re talking about jobs and what we call the Atlas, which is basically a landscape we can look across from a segment and jobs perspective, in order to plot the insights we have and the white space where we really don’t know and could do research.

So the Atlas is a very meta thing. We had to go and get sponsorship to even do the research around creating the Atlas. Hopefully the Atlas itself will provide us with that mechanism to point to white space and say, “Hey leadership, wouldn’t it be great if we actually could turn that box green, because we knew things about that.”

There may be opportunities living in there that we haven’t surfaced before.

Peter: The map is the territory. When you say “jobs,” I believe you’re referring to jobs-to-be-done, and I know that… And it sounds like you’re continuing to have success with the jobs-to-be-done framework. I’m probably going to misrepresent Jared Spool, but he’s been a bit of a jobs-to-be-done naysayer. Not that he thinks there’s anything wrong with it; he just thinks it’s old wine in new bottles, that there’s nothing in jobs-to-be-done that good user researchers weren’t already practicing. I’m wondering what you’re seeing in terms of jobs-to-be-done as a framework, as an interface and interpretation layer between the work of research and others, that has been particularly helpful.

Jen: There are numerous reasons, based on different company pathologies, why jobs has been helpful. Outcome-Driven Innovation was useful at Athena because the product owners were really struggling to establish ownership over prioritization. Their prioritization of feature functionality and value creation was very vulnerable to being completely upended by leadership at any given moment.

And so having something quantitative to point at, something that identified the unmet needs that presented opportunities for us to go after, gave them much stronger standing and conviction about the prioritization they had picked. And it’s forged a very strong partnership between strategic design and product at Fidelity.

Jesse: You’ve talked a bit about the use of quantitative methods as a way of forging these relationships and strengthening the case for the business value of various initiatives, but also of research itself. And I wonder where the qualitative fits into this.

Tell me about the insights part of the equation, and how that’s going in terms of making the case for people to listen to what you’re coming up with.

Jen: Yes, absolutely. There’s the framework we use: Right Problem, Right Solution, Done Right. For Right Problem, we have a model that doesn’t always get followed exactly, but that we look to as a north star: a qual-quant-qual sandwich.

The qual in the beginning, the first phase, is really about understanding where there may be openings of opportunity: places where people think something is very important but aren’t very satisfied with it. So we’re trying to flesh out a job map and areas of opportunity by doing that really deep, high-quality qualitative work.

From that we can create a job map, which takes us into a quantitative phase of running ODI or jobs-to-be-done work. That way the instrument that’s generated is really well informed, and people have really listened to and understood the stories of the people we’re talking about. But that’s not the end. It’s not that you get the data from jobs-to-be-done and then all of a sudden you know what you need to go build. It surfaces outcome statements, which are essentially unmet needs. Then we go back in and do more qual, because we want to understand the root cause behind the things bubbling up in the opportunity scores. We really want to get at where this thing is broken. Where’s the friction? So in Right Problem, it’s very important that you have qual bookends.

And then in Right Solution, we’ve been getting much more specific in how we use qual and quant, not just in UXR but also with our market research partners, to build a very strong approach to encouraging divergent thinking: screening many ideas through quant, and doing qualitative resonance testing with very thick data from high-value qualitative interviews. Once we’ve narrowed down on some concepts, we do market potential assessment, which is far more rigorous and far more quantitative. So we’re increasing the amount of rigor depending on the uncertainty and risk we are facing, but we’re still having the healthy idea harvesting and idea generation that we need to see teams engaging in, so that they have a higher likelihood of success.

So we’re mixing qual and quant, and we’re also partnering very intensely with the other research disciplines to do that properly.
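Editor’s note: the “opportunity scores” Jen mentions come from Outcome-Driven Innovation. In its commonly published form, each outcome statement is rated for importance and satisfaction, and the opportunity score is importance plus the gap between importance and satisfaction, with the gap floored at zero so over-served outcomes get no extra credit. The sketch below assumes 1-5 survey ratings converted to a 0-10 scale by the share of respondents answering 4 or 5 (top-two-box). The function names and outcome statements are hypothetical illustrations, not a description of how Fidelity computes its scores.

```python
from dataclasses import dataclass


def top_two_box(ratings: list[int]) -> float:
    """Share of 1-5 ratings that are 4 or 5, rescaled to a 0-10 score (assumed scaling)."""
    if not ratings:
        raise ValueError("need at least one rating")
    return 10 * sum(1 for r in ratings if r >= 4) / len(ratings)


def opportunity_score(importance: float, satisfaction: float) -> float:
    """Importance plus the unmet gap; a negative gap (over-served) counts as zero."""
    return importance + max(importance - satisfaction, 0.0)


@dataclass
class Outcome:
    statement: str  # hypothetical outcome statement, for illustration only
    importance_ratings: list[int]
    satisfaction_ratings: list[int]

    @property
    def score(self) -> float:
        return opportunity_score(
            top_two_box(self.importance_ratings),
            top_two_box(self.satisfaction_ratings),
        )


def rank(outcomes: list[Outcome]) -> list[Outcome]:
    """Highest opportunity first: candidates for the follow-up round of qual."""
    return sorted(outcomes, key=lambda o: o.score, reverse=True)


if __name__ == "__main__":
    survey = [
        Outcome("Minimize the effort to update a beneficiary",
                [3, 2, 4, 2, 3], [4, 5, 4, 3, 4]),
        Outcome("Minimize the time it takes to see my full financial picture",
                [5, 5, 4, 3, 2], [2, 1, 3, 4, 2]),
    ]
    for o in rank(survey):
        print(f"{o.score:4.1f}  {o.statement}")
```

The ranking only says where to look; in the qual-quant-qual sandwich, the top-scoring statements are what the second round of qualitative work digs into for root causes.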

Jesse: I’m curious about that relationship, because it’s not one I had really considered: the relationship you might have with other research functions in the organization, which might have very different cultures of research and ways of thinking about how to tackle these kinds of problems. How’s that going in terms of the push and pull, and striking a balance with your other research partners? I have worked with some market researchers who had a hard time understanding product research. They did not have the mindset for it. And maybe the culture of your organization is different from that.

Jen: It was new to me when I came to the organization, because the places I’d been previously didn’t have strong, mature market research practices. So building that relationship has been a great deal of the effort. We’ve put a lot of effort into understanding where there’s give and take, where there are things they can own entirely, and where there are things UXR can own entirely.

And where is it great for us to partner? One of our great achievements is being able to say we know how to get through Right Solution together. Yeah, it’s very exciting. But yeah, I have been learning so much about market research that I did not know. It was very enlightening and very humbling, because I just didn’t understand all of the techniques, like when people start talking about monadic concept tests and volumetrics.

I was like, aha, nodding my head and then quickly Googling things. It’s an intense, large field with very specific techniques that have been very well honed over the years. So it’s becoming more and more of a thing that UX researchers really should understand.

And if you’re building a research org from scratch, you want market researchers on your team. The quantitative work alone is incredibly intense and valuable. But then also they have qualitative techniques and they have honed qualitative techniques that may be slightly different than the way we come at it in UXR.

So I’ve been learning a lot, and they’ve been learning a lot from us. The other really important partnership, though, has been with the behavioral economics group, which, wow, is an absolute superpower. Being able to carve out experiments from a behavioral economics perspective to test certain hypotheses and experience strategies is just mind-boggling. I have been really impressed with that partnership, and whenever we have the opportunity, we embed our UX researchers in those projects so that they can learn.

Peter: Did this research team exist as it is before you joined, and you joined to lead part of it, or did it assemble Avengers-style once you came on the scene and there was the right, I dunno, mix of leaders and functions? What was the insight behind creating this independent research team?

Jen: So, prior to my arrival, UX Research had lived in UX Design, and when the first business unit went through the agile transformation process, they moved UX research into this research and insights organization. So when I joined, the UXR org had already lived in that research and insights organization for six months.

Peter: Got it. Interesting. That’s possibly the only good thing to have ever come out of an agile transformation.

Jen: It is, it really is. And beyond my four peer groups, we live in the data organization, so we live with analytics, with AI, with measurement. Usually when you’re thinking, we need data around such and such, or I need metrics, the first thing you have to figure out is how to harvest that information: how to create a system to collect that data and then analyze it.

But more often than not, that data already exists. It’s a matter of finding it and then packaging it up the way we need to. Because we live in that org, a superpower we have had to develop is knowing all the types of data we have, who owns it, manages it, and plays with it, and how we get access to it. So it’s definitely a kid-in-a-candy-shop situation: you don’t have to create all the instruments to collect the data, but you do have to get really good at networking and knowing all the players.

Music break 1

Peter: So one of the challenges a lot of design leaders face, including at a company I’m working with right now, is the reduction of design to that which can move measurement: “We’re going to do A/B testing, and the best design is the one that succeeds on whatever measurement we decided we were going for.”

And when that happens, when design gets reduced to moving needles, a qualitative, experiential component gets lost that I think we all recognize the value of, but that’s hard to measure. I’m wondering, with this setup of UXR within a data team with a lot of quantitative researchers, what happens to the qualitative research you’re working on, because it’s looser, more nebulous, more amorphous, less easy to reduce. How do you protect the richness of that thick data you mentioned in the face of the possible onslaught of metrics and numbers that others are wielding?

Jen: I’m working on a project right now that is an experience transformation project. We’ve partnered by creating a virtual squad between all the quant and qual researchers, so that we could approach building a holistic measurement strategy. And one of the things we did was set timeframes around measuring the metrics.

Like, when should we be able to measure this change? One of the things I think is very dangerous is looking for short-term gains, like the needle moving because I put something in the market two weeks ago. That’s really dangerous. And so we build a roadmap of measurement that says, for example, we probably won’t see this needle move for another year, just to set expectations.

So don’t ask me to measure that next month, because we don’t expect it to move, but we do expect these other things to happen. One of the interesting things I’ve seen is from Teresa Torres and Hope Gurion, who teach product management. They had a really nice webinar where they talked about three levels of metrics. There are traction metrics, which is, “I got the person to click on the thing.” There are product metrics, which are about adoption or engagement, like actually using the thing to get something done. And then there are business metrics, which are the much bigger needles to move: satisfaction and NPS, operating income, new money, those types of things.

And we need to be able to really identify when you should see those things, and how they actually correlate as leading indicators of lagging outcomes. We spent at least two months creating that model of how we should measure this transformation and experience.

That’s the type of attention we need to pay. One of our partners is the A/B testing team, and they’re all in on that, because they don’t want to be setting up itty-bitty A/B tests that are supposed to show big change but basically don’t detect any statistical difference. They want to be called in when it’s significant enough to make it worth their while. So we are building these measurement models, and we are using very specific words. Just a couple of weeks ago I was correcting people who were talking about a beta test. I said, “I don’t want to use the word test here. This isn’t a test.” What we’re trying to do is collect and harvest feedback that the creators of this experience can use to fine-tune the design. This is not a test. This isn’t go/no-go. It’s not “it’s good” or “it’s bad.” It’s actually a mechanism for generating useful, articulate guidance that we can use to make this thing better.

Jesse: I’m struck by the breadth of your mandate, which feels unusual to me. Maybe it’s not that unusual for an organization of your scale, but it still feels more common for me to talk to research managers whose research is boxed in to delivering a specific kind of data or insight back to the organization, and the organization isn’t really interested in hearing about anything outside whatever box was originally established for them. Or sometimes a research organization will be established with a broad mandate, and then that mandate gets whittled down over time to the most provable forms of research, which are the only ones the organization is willing to keep funding. From the perspective of a research manager in that situation, how do I start creating some more space to try new things and take some chances with the research, to push beyond the expectations I’ve been boxed into?

Jen: Well, there are some things we do as researchers that are that basic kit of parts. Take evaluative research, for example: someone shows up and says, “I want a usability test.” That’s great. That’s good work. That’s interesting. But there’s a point at which, if an organization has been doing usability tests for 20 years, you ask: could we teach some other people to do that? If we were able to give them that capability, would that help them learn faster, and would that free up some of our capacity to do other things, like more Right Problem and Right Solution work? And then could we get some wins out of that Right Problem and Right Solution work that we can show the organization: look, this is really interesting stuff on this team. We partnered with them on Right Problem.

We were able to surface these opportunities, and they were able to take that into co-creation, be hugely productive, and put something in market. So you want to make space for your team so that they’re not on a hamster wheel, and you do that by multiplying their capability by giving some of it away.

And then you want to find partners, either design leaders or product leaders, who are interested in that thick data and that more intense collaboration, and you lock arms and find opportunities to show some wins. That’s the basic playbook. I know that every organization is different, so making that happen can be difficult in organizations that are very adamant about seeing research as a lobster tank, or just a shared service they get tests from. But like I said, that word “tests” is what sets you down that path, too. So choosing different vocabulary to explain the value you can have is a little baby step, but shifting that vocabulary can help a great deal.

Peter: The lobster tank suggests a certain New England frame of reference that perhaps you’re operating within. I’m also wondering if there’s something about the culture of the product or organization that you can credit for the broader mandate you’re realizing. What is it about Fidelity that has created this space, this opportunity for research to have a far-broader-than-typical mandate?

Jen: Well, this was an organization that was going through a transformation and was fully invested in change. Like, change is hard, but we have to start down this path. And so they were already in that growth mindset and wanting to do things differently than they had in the past.

So I’m lucky, my timing is lucky, right? Because I show up and they’re like, “We want change. Can you give us change?” And I’m like, well, if you shaped it like this, that’s dramatically different. It gets you insights versus tests versus just strict validation. So how can we mold ourselves and create an organization that makes that happen?

So they have an appetite for change, and they’re willing to be a little uncomfortable with that for a while. So that helps. And the other thing is, this organization is so obsessed with learning. We actually have learning days: every Tuesday is a learning day. They’re really adamant about people building T-shaped skill sets.

And they’re adamant about career mobility as well. So when we propose the idea of research democratization, we didn’t have a bunch of people saying, “Uh, not my job. I don’t want to do that.” We had people queuing up. We had a backlog, we had a line, a waiting list that was months long for people to get into the program, to learn how to run evaluative studies on their own.

And the way we were able to frame that was with a measurement we invented called learning velocity: how fast is your team able to learn? If you get in our backlog, you could be waiting a month before you get insights from that little test you wanted to run, but if you can do it yourself, you can do it in three days.

And so the whole idea of, ooh, more learning, more insights: this is a culture that is hungry for that all the time, and hungry for growing its skill sets. So it’s like that perfect storm, but in a very positive way.

Peter: Fidelity is a large mature private company, right? So you’re not dealing with the public markets. You’re not dealing with quarterly earnings. You’re not dealing with that hamster wheel that I think so many people face and which narrows or limits the focus, I think is probably a better way to think about it.

Jen: Yeah, in publicly held companies you often end up with a lot of short-term-focused initiatives looking for a fast hit. In a privately held company, there is patience and persistence.

So you think of investor mindset. We are the perfect company to talk about investor mindset. It’s basically how we’ve stayed in business for 75 years. That and exceptional customer service and relationships that we build with our customers. So we’re willing to make bets on things that may not bear fruit for a year, five years, 10 years. That’s okay with a private company.

Peter: You’ve used, it’s almost a mantra, Right Problem, Right Solution, Done Right. Was this something that existed before you joined, is this something you helped generate? Is this something you used in the past?

Jen: I brought it with me, and as I’ve mentioned in other talks I’ve given, it’s standing on the shoulders of giants, because it’s inspired by the double diamond and by “build the right thing, build the thing right,” from my work with the amazing team at Athena, too.

But it was a really nice way for me to frame conversations with folks about what it was that was valuable to them and, how we, as researchers, could help them. And it had that effect of having people question whether they actually had a right problem that they were going after.

So it started to push teams toward that self-awareness, and now they feel very adamant about having that right problem defined. It is kind of a rallying cry for people now. It’s very common to hear product owners and other participants in the process who aren’t researchers talking about Right Problem, Right Solution, Done Right.

Peter: You mentioned double diamond. If I understand it right, Right Problem would be almost a diamond zero before the double diamond. Is that fair, or…?

Jen: Yeah, I think that’s very fair. Yeah, I mean that right problem and diamond zero, it’s one of the most important aspects of ensuring team functionality. So, like, dysfunction in scrum teams or in product teams starts at the very beginning. It starts when people don’t agree on the problem that they’re solving, never mind the solution that’s being designed.

And so you want to get really crisp, and be super articulate about that problem that we’re solving and who we’re solving for, and falling in love with that problem, because once the team moves into right solution, people feel super energized and capable, and they have agency for creating many solutions, which we know increases their likelihood of success when they put a product in market.

Music break 2

Peter: Research, done right, doesn’t fit the shape of business. So if you’ve got these domains that you referred to, people don’t neatly fit in these domains. They are likely crossing domains. And I’m wondering how research works along those journeys, and doesn’t fall into silos of the domains, and if the Atlas is a means by which you don’t get stuck, or are able to realize the fullness and richness and messiness and weirdness of your customers’ lives.

Jen: Yeah. That’s exactly what the purpose of the Atlas is. And the Atlas has two altitudes. So there’s jobs, which are really big things that are solution-agnostic, like “assess my financial situation” or, “help me transition to retirement.” Those are things that aren’t about the actions you’re taking in an environment. I’m not opening an account. I’m not, you know, transferring money, right? But those things are important, too. We call those tasks and we have an Atlas of those as well.

And the reason the Atlas will be useful for us there is that we find those tasks are sometimes slivers of an entire journey. You could have 20 teams all working on a version or a piece of that task. We’re trying to reconcile that, and where research might be happening in pockets of the organization that are all focused on that task, we’re backing up a little and making everyone aware of each other.

And saying like, “How can we do a body of research that serves all of you versus 20 discrete research studies?” And so awareness is part of the problem with that. And the Atlas helps us inventory, basically, who’s working on what, and it helps us address that patchy collection of research studies.

So bespoke, discrete research studies are the foundation of your insights, and we’re starting to build structure on top of them. Imagine a grid sitting over those little patchworks: there are places on the grid, a longitude and latitude, so that we can start to ask, “What do we already know from the bespoke and discrete research studies, and how do we structure this going forward?”

So it informs many squads and many tribes and many domains. It becomes a body of knowledge that is more universally useful versus being commissioned by one team for their purpose, and then never used by anyone else ever again.
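Editor’s note: the Atlas, as described here and earlier in the conversation, is a grid of segments and jobs on which discrete studies are plotted, so that white space and overlapping work become visible. The sketch below is a minimal, hypothetical model of that idea. The class, its method names, the segment labels, and the study IDs are assumptions for illustration, not Fidelity’s actual Atlas; only the two job names are taken from the interview.

```python
from collections import defaultdict

Cell = tuple[str, str]  # (segment, job) coordinate on the hypothetical grid


class Atlas:
    """Hypothetical segment-by-job grid for plotting discrete research studies."""

    def __init__(self, segments: list[str], jobs: list[str]) -> None:
        self.segments = list(segments)
        self.jobs = list(jobs)
        self._studies: defaultdict[Cell, list[str]] = defaultdict(list)

    def plot(self, study_id: str, segment: str, job: str) -> None:
        """Place a study's insights at a (segment, job) coordinate."""
        if segment not in self.segments or job not in self.jobs:
            raise KeyError(f"({segment!r}, {job!r}) is not on the grid")
        self._studies[(segment, job)].append(study_id)

    def _cells(self) -> list[Cell]:
        return [(s, j) for s in self.segments for j in self.jobs]

    def white_space(self) -> list[Cell]:
        """Cells with no studies yet: where we really don't know."""
        return [c for c in self._cells() if not self._studies.get(c)]

    def crowded(self, threshold: int) -> list[Cell]:
        """Cells where several studies pile up: candidates for one shared body of research."""
        return [c for c in self._cells() if len(self._studies.get(c, [])) >= threshold]


if __name__ == "__main__":
    atlas = Atlas(
        segments=["early-career savers", "pre-retirees"],  # hypothetical segments
        jobs=["assess my financial situation", "help me transition to retirement"],
    )
    atlas.plot("study-101", "pre-retirees", "help me transition to retirement")
    atlas.plot("study-102", "pre-retirees", "help me transition to retirement")
    print("white space:", atlas.white_space())
    print("crowded:", atlas.crowded(threshold=2))
```

Keeping the grid axes fixed and solution-agnostic, rather than deriving them from whichever teams commissioned studies, is what lets separate studies accumulate into one body of knowledge.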

Jesse: It sounds like an information architecture job.

Jen: It totally is. Yeah, I agree. You know, thinking about how to structure your insights: we all do it bottom-up. The first thing people do is go into an org and say, I’m going to build a repository, dump all these things in, and make it searchable. And I’m going to use AI, and that’s going to fix everything. But that’s coming at it from the wrong angle. Oftentimes that bottom-up collection of discrete research studies doesn’t click together. The studies are not cumulative. They don’t create a living, dynamic body of knowledge, because we haven’t structured them to do that at all.

Jesse: Right. Well, as with any other enterprise IA challenge, it often becomes a matter of divergent goals leading to divergent viewpoints, and those divergent viewpoints then becoming encoded in the structures by which everybody understands the strategy going forward.

Jen: Yeah, it's a balance. You have to figure out, "How do I structure this so it's somewhat agnostic to who we are and the way we deliver things now?" Jobs are one way to think about that.

Jesse: Hmm, it's interesting. I've never thought of it this way before, but through this lens I can see jobs-to-be-done as a tool for defining the information architecture of a product offering.

Jen: Absolutely, it could be used for that. And the great thing about ODI in particular (not to be overly dogmatic; it's not the only jobs theory, but I'm fond of it) is that it distinguishes two levels. There are core functional jobs, which are very high-level jobs, and then there are consumption chain jobs, which we call tasks, but they could be other things. They could be journeys. They could be other types of experiences. But it gives you that leveling, so you can work at both ends of the spectrum.

Jesse: You mentioned your partnerships with the market research folks and the behavioral economics folks as things that were really invigorating for you because they had expertise and methodologies that expanded your world. How would you like to see the work of UX research evolve and expand going forward?

Jen: Hm, that’s a tough question.

I would like UX researchers to gain some of those skills. In design land, we talk about the T-shape: not necessarily creating generalists, but covering more of the breadth of things you could know as a designer or design specialist, and then going deep in some of those areas. The way we're talking about that, because we don't live in Design, is this: do we have a T-shape for a researcher that spans user experience research, market research, behavioral economics, loyalty, advertising, and marketing? Do we start to create generalists in that vein? So that's one thing I'm particularly interested in: building out researchers' skill sets so they can learn more techniques from those other research disciplines.

I think that's really important, because we're not always going to be in a situation where there's low risk and low uncertainty, and we can go talk to five people and then launch a product. In many cases, if you're at an existing company, you have lots of revenue at stake, there's a history, and there are many customers whose experiences could be upended by the changes you make. So we really do need to know a lot more about the rigor that goes into traditional research techniques. We can't test everything in market, which is another area I would love for UX researchers to understand.

I know a lot of startups do understand A/B testing. But because we can't test everything in market, how can we build better systems to set norms and predictions and understand correlation? So some of the skill sets I'd love to see are more quant and more understanding of stats. You don't have to be a statistician, but you should know what people are talking about and understand when to call the expert. That would be wonderful to see as a sign of maturity in the field.

Peter: There are clear parallels with design getting reduced to, at least in digital contexts, visual design, interaction design, and screens. Research has also been reduced by companies. There's so much it could be, but research has been thought of as interviews, maybe some analysis, and we'll call it a day. I think what you're pointing out is that this can be a field, an industry, a practice as varied and rich as design, as software engineering, as any number of other things. But the companies we work with tend to see research in a very limited mode.

You talk about skills building, and you're the lead of a team. You probably have research leaders within your organization. I'm wondering how you approach UX research leadership. As you're building the capabilities of the people on your team, what skills are you paying attention to?

And what are you focused on in terms of growing the leaders within your organization?

Jen: So last year was a big growth year for us, because we added that management layer. Before that, UX research was more a bunch of Lone Rangers roaming the halls, finding squads to do work for, while we were building out the teams. So we were looking for leaders who had very specific qualities, including some that might be a little more nuanced than just a skill set.

So what we work on is, first of all, that growth mindset of coming in and saying, "I'm going to learn things here. I don't know all the answers yet. I'm probably going to be partnered with people I'm going to learn a great deal from." And then also having a multiplier mindset. It can be career-limiting to be very territorial about what work you should be doing and what work others should not touch because it's yours.

So we work in a very collaborative way, and we encourage participation in our democratization of research program. Even if you're not one of the instructors, you could be a buddy, you could be a coach.

You can help that program grow, because it pays such huge dividends for us. Then some of the other things are being really well aware of how product management works. A lot of the books I give my team to read are product management books, not so much design books. It's kind of expected that they understand the design process, but I do want them to understand what good, solid product management looks like, because the product owners, the squad leads, and the tribe leads are the ones who most need them.

So if we can help them see things in a way that’s helpful to get them to move forward with product initiatives and move their projects forward, then those are great friends that we want to have forever. So I want them to be able to speak their language. So that’s something that we focus on.

And then that idea I mentioned earlier, career mobility. They're not just research leaders, they're leaders, and they should be able to move around the organization. For example, one of the research leaders I brought in last year just went over to the design team. She's going to be working on Russ Wilson's design team now, which is awesome. I love seeing researchers and designers move across the organization, and also move over to product, because there's nothing better than a product owner who's been in design or research. They're excellent partners.

So I want to make sure they understand that they have a personal brand. We've been talking about, "What are you known for? What do you want to be known for? What projects can we attach you to so that you can better illustrate that story? What's your narrative?" Working on that is very important to me, because they're not always going to work for me. I might be working for them someday. They might move to a different role at Fidelity, but I want them to have a very strong identity, not just that they're part of my team.

Peter: So at Fidelity, you've found yourself not only leading this research team, but connected to other researchers, people with deep and really rigorous experience. One of the challenges I've seen with research is that it can vacillate between two poles.

It's either a little too informal, "Let's just talk to a few users and call it a day." Or, what I've often seen, it's people with PhDs in some form of psychology or ergonomics or whatever, who say, "The only acceptable research is research run by people with PhDs who understand all the highly detailed realities of doing research, quote, the right way, unquote."

I'm wondering how you navigate that. There's a desire to get your product teams and others doing research, but there are also professionals who know how to do it, quote unquote, the right way. What are the judgment calls around the practice of research? How do you make sure the PhDs don't get their knickers in a twist because someone's talking to five people in some informal way, but also not get bogged down by rigorous research all the time?

Jen: So we have something called a learning agenda that we create with the product owners, squad leads, and tribe leads who are trying to learn all the things. We come together as a team: UXR, plus market research and behavioral economics if they're involved and think they can help.

We create an integrated learning agenda: what do we want to learn? Then we talk about how we could learn those things, with what techniques, and who's going to take what. So you'll actually see these nice little plans, phase one, phase two, phase three, all still sitting in Right Problem. But it's like, who's taking what? And how are we orchestrating the readouts, so that we're either doing them together or they're in an order of operations that tells the story appropriately?

That's where we get into the quant-qual-quant-qual type of pattern, or cadence. So we do that together with them. If you're in a very quantitatively driven organization where there's massive fear around risk and uncertainty, you need to partner with the quants.

You're not going to win a battle of "my five users" versus "your 3,000-person balanced-sample study, backed by a third-party research company." You want to take those things and ask, "Which questions is each of these best at answering?" What we find is that a lot of that really intense survey work surfaces many more questions that we can get at very deeply with one-on-ones, or IDIs, as they call them in market research. So we can do this nice dance back and forth and use the techniques for what they were built for. Use the right tool to get the effect you're looking for. So it's definitely a partnership. I don't think it's one or the other.

We can find a way through this together for sure.

Jesse: Beautiful.

Peter: Yeah, I’m imagining you as Dorothy and everyone else is one of the animals skipping down the yellow brick road, linking arms.

Jen: Thank you both so much. This is really fun.

Jesse: Jen, thank you so much.

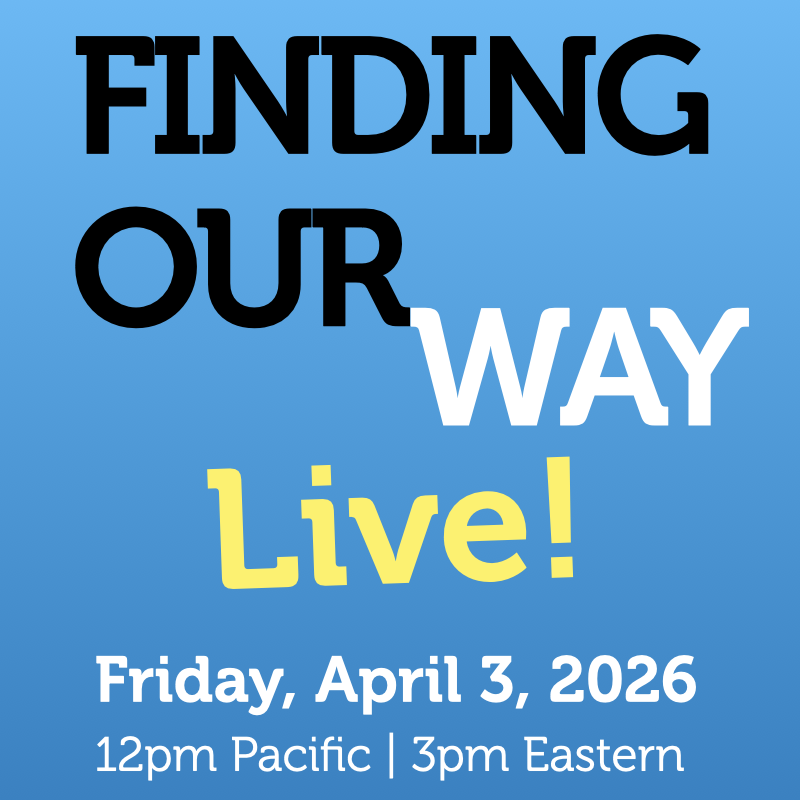

And that wraps up another episode of Finding Our Way. You can find Jen Cardello on the internet. She's Jen Cardello on LinkedIn, and she's @jencardello on Twitter. You can find this podcast on the internet as well. You can find past episodes and transcripts on our website, findingourway.design. There's also a feedback form there.

We love to get your feedback, either through our website, on LinkedIn, or on Twitter. I'm @jjg. He's @peterme. We'll see you next time.
